Nevertheless, the opportunities for social business are growing, and nowhere do I see a greater need than in supply chain management, specifically planning systems.

Most supply chain planning (SCP) systems today are not social. Rather, they are oriented around the job of an individual planner, who works with a user interface that strongly resembles an Excel spreadsheet. Rows show demand and supply, with columns indicating time periods, left to right, marching into the future. Highlighting is used to indicate periods where there are shortages of resources, whether material, capacity, or other elements of production. Exception messages alert the planner to take action. Except for better graphics, the user experience is not much different from that of the MRP systems that I worked with and taught in the 1970s and '80s.
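For readers who have not worked with one of these grids, the logic behind the shortage highlighting can be sketched in a few lines of Python. This is only an illustration of the time-phased idea, not how any particular SCP or MRP product implements it; the `Period` class, the `projected_balance` function, and the sample numbers are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Period:
    """One column of the planning grid (hypothetical structure)."""
    label: str
    demand: int
    supply: int


def projected_balance(periods, on_hand=0):
    """Walk the periods left to right and flag any that go negative.

    Returns a list of (label, projected on-hand, shortage flag) tuples.
    """
    rows = []
    balance = on_hand
    for p in periods:
        balance += p.supply - p.demand
        rows.append((p.label, balance, balance < 0))
    return rows


# Made-up sample data: three weekly buckets for a single item.
plan = [Period("Wk1", 40, 50), Period("Wk2", 70, 30), Period("Wk3", 20, 60)]

for label, balance, short in projected_balance(plan, on_hand=10):
    flag = "SHORTAGE" if short else ""
    print(f"{label}: {balance:>4} {flag}")
```

With this sample data, only the second week projects a negative balance, so it is the one period a planner would see highlighted. Deciding what to do about it is the part the grid does not help with.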

What’s Wrong with Spreadsheets?

The spreadsheet paradigm has survived for decades because it does have its strengths. First, it is familiar to anyone trained in principles of supply chain management. Second, it allows a lot of information to be conveyed on a single page.

The issue comes in the “take action” part of the planner’s job, especially when an action affects other participants in the supply chain, such as customers, suppliers, or sub-contractors. For example, a planner may be trying to resolve an issue with a late order. Taking action in this case might mean paying premium freight to expedite a supplier order, rescheduling production, shorting another customer, scheduling overtime, or any number of exceptional actions. The problem is that such decisions can rarely be made by a single individual. They require collaboration and approval by various other players inside and outside the organization. At this point, the planner turns from the SCP system and picks up the telephone, sends an email, or convenes a meeting.

Traditional SCP systems are good for identifying the problem, and they are good for recording the decision. But they are not good as a platform for collaboration to discuss the problem and to make a decision. Supply chain collaboration is not simply a matter of “getting approval.” These are content-rich collaborations, often requiring analysis of what-if scenarios and tradeoffs between competing metrics and objectives.

In other words, today’s SCP systems are systems of transactions, not “systems of engagement” (to use the term coined by Geoffrey Moore).

What Does Social SCP Look Like?

I got a little insight into what the next generation of SCP systems might look like when I attended the Kinaxis user conference last month in Scottsdale, AZ.

By way of background, Kinaxis provides a supply chain planning system, dubbed Rapid Response. The company was founded in the early 1990s and has been through several name changes, most recently from Webplan to Kinaxis in 2005. Kinaxis was developing in-memory software long before in-memory became an industry buzzword. The firm also moved to a cloud delivery model in the late 1990s, around the same time that Salesforce.com and NetSuite were starting out. Kinaxis has been successful selling into large companies with complex supply chains and competes directly against SAP and Oracle, as well as other best-of-breed specialists that vie for this market.

During the half day of analyst briefings, Kinaxis executives put up some screen shots of a new user interface that the company is considering. Although they did not use the word “social” to describe their objectives, I immediately saw the embedded social aspects of the new user interface.

- Automatic team selection. In a large organization, it is not always readily apparent who needs to be involved in a certain supply chain decision. Knowing who should be involved on the customer and supplier side can be even more difficult. The prototype role-based dashboard automatically tells the planner or other user who needs to be involved—inside and outside the organization—in deciding each proposed action.

- Business intelligence in context. For each supply chain decision needed, the demo UI allows each participant to see the impact of the proposed action on the business and on other people. So, there’s no need to leave the application to look up relevant information. In this way, the system promotes cross-functional alignment and consensus.

- System of engagement. The new UI does more than just record the transaction. It captures team voting, comments, and assumptions, which are traditionally done outside the formal system.

- Cross-device access. No more waiting until you get back to your desk. The new UI automatically reformats itself across desktop, tablet, and smart phone displays, allowing access anywhere, any time. Going beyond the Apple/Google operating systems that many vendors support, Kinaxis also supports Blackberry and Microsoft mobile platforms.

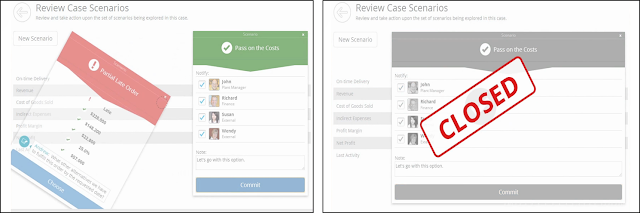

- Light gamification. When the team arrives at a decision for a given case, the alternative scenarios fall off the display, like sticky notes falling from a whiteboard, and the word “Closed” is stamped on the case—a little visual reward for resolving the case. Though I didn’t see it in the demonstration, I can envision a leader board for each functional group, showing number of cases resolved and other metrics that the organization deems important.
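To make the list above a little more concrete, here is a minimal sketch of what a decision case record might hold if voting, comments, and case closure live inside the planning system. Kinaxis did not show any data model, so everything here is an assumption for illustration: the `DecisionCase` class, its field names, and especially the closing rule (everyone has voted and one scenario has a strict majority) are hypothetical.

```python
from collections import Counter
from dataclasses import dataclass, field


@dataclass
class DecisionCase:
    """Hypothetical record for one supply chain decision."""
    issue: str
    scenarios: list       # candidate actions under discussion
    participants: set     # people selected to take part in the decision
    votes: dict = field(default_factory=dict)      # participant -> scenario
    comments: list = field(default_factory=list)   # (participant, text)
    status: str = "Open"

    def comment(self, who, text):
        self.comments.append((who, text))

    def vote(self, who, scenario):
        if who not in self.participants:
            raise ValueError(f"{who} is not on this case")
        if scenario not in self.scenarios:
            raise ValueError(f"unknown scenario: {scenario}")
        self.votes[who] = scenario

    def close_if_decided(self):
        """Assumed rule: close once all have voted and one scenario has a majority."""
        if set(self.votes) != self.participants:
            return None
        winner, count = Counter(self.votes.values()).most_common(1)[0]
        if count * 2 <= len(self.participants):
            return None
        self.status = "Closed"
        return winner


# Made-up example: a late order with two candidate actions.
case = DecisionCase(
    issue="Late customer order",
    scenarios=["expedite freight", "schedule overtime"],
    participants={"planner", "buyer", "sales"},
)
case.comment("buyer", "Premium freight adds cost but protects the ship date.")
case.vote("planner", "expedite freight")
case.vote("buyer", "expedite freight")
case.vote("sales", "schedule overtime")
decision = case.close_if_decided()
print(case.status, "->", decision)
```

The point of the sketch is that the discussion and the outcome sit in the same record as the planning decision, rather than in an email thread or meeting notes.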

Embedded Collaboration vs. a General Purpose Tool

To be fair, Kinaxis is not the first to seek application of social business principles to the supply chain. However, most attempts thus far have involved general purpose tools, such as Microsoft’s SharePoint or Yammer, or Salesforce.com’s Chatter, to capture collaboration among trading partners. There has also been talk about the use of social media sites such as Twitter to monitor or rapidly communicate events that may affect availability of material, for example. But these just scratch the surface of what is possible.

Moreover, using a general purpose social tool requires the planner to use one system for planning and another for collaboration, with little or no connection between them. So, when supply chain professionals are in the planning system, they can’t collaborate, and when they are in the collaboration system, they can’t plan.

In contrast, the social business capability being considered by Kinaxis is not some general purpose activity feed layered on top of the application. Rather, it is embedded in the application itself. The automated team selection solves a real problem in large complex supply chains. The discussion thread is natively embedded as part of the application and is focused on specific decisions to be made. There are no side discussions about pet cats or who’s bringing what to the company picnic. If those things are important, let them be relegated to Chatter or Yammer and keep the SCP discussion focused on taking supply chain actions.

The prototype coming out of the lab at Kinaxis gives a clear view of what is possible in putting social business constructs into supply chain planning. It helps that Kinaxis has built a complete SCP solution from top to bottom as a single system, as opposed to building it up from acquired components. With a single in-memory system, Kinaxis can more readily provide all the information at the same time to all participants. There is no cascading of plans sequentially from one level to another: all levels are planned concurrently.

Does this mean that SCP vendors need to give up the spreadsheet paradigm? Not at all. My advice would be for vendors to continue to use the spreadsheet user interface. As I noted, it does have its benefits. But the “social SCP” paradigm needs to be introduced alongside the spreadsheet. In this way, long-time SCP users can continue to work with the interface they have grown up with, and at the same time, be introduced to a different paradigm. User interface changes can be quite unnerving for long-time system users. A parallel approach will make the transition easier.

You can watch the full video of the prototype user interface by clicking the graphic below (free registration required).
